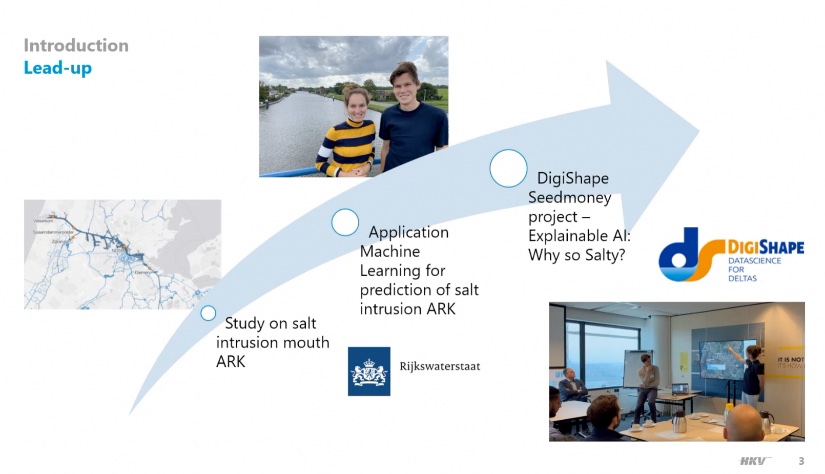

On Wednesday, January 10, Paula Lambregts and Thomas Stolp from HKV gave an online technical session on the DigiShape Seedmoney project Explainable AI. The project has now been completed, and in this demo you can see how the tool works. Scroll down to watch the video recording of the webinar.

Why Explainable AI?

Machine learning models are being built and deployed more and more frequently for all sorts of applications in the water sector. They often give better results thanks to their flexibility and predictive power. However, this comes at the cost of understanding the system, because of the black-box nature of these algorithms. As a result, users of AI (prediction) models often have limited confidence in the model output, even when these models perform better than their physics-based counterparts. The project partners HKV and Rijkswaterstaat encountered this during a project in which a machine learning model was built to predict salinity on the Amsterdam-Rhine Canal.

XAI Tool

With the help of DigiShape Seedmoney, in 2023 they set up an Explainable AI (XAI) tool that can give users in the water sector more insight into, and confidence in, predictions generated with machine learning models. The tool was built around the example case above: the prediction of salt concentrations on the Amsterdam-Rhine Canal (ARK). While the neural network of the machine learning model runs in the background, users can change the input settings (such as wind and discharge) and see how much effect each change has on the prediction. In this way, they can check whether the model behaves as they would expect, just as they would with a linear model.
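The idea of changing one input and watching the prediction respond can be sketched as a simple one-at-a-time "what-if" check. This is a minimal illustration under stated assumptions, not the HKV tool itself: `predict_salinity` is a hypothetical stand-in for the trained neural network, and its coefficients, the input names `wind_speed` and `discharge`, and the baseline values are invented for the example.

```python
from typing import Callable, Dict, List, Tuple

Inputs = Dict[str, float]


def predict_salinity(inputs: Inputs) -> float:
    """Hypothetical stand-in for the trained neural network.

    The coefficients are invented for illustration only; the real ARK
    model is a neural network whose internals are not reproduced here.
    """
    return 150.0 + 4.0 * inputs["wind_speed"] - 0.05 * inputs["discharge"]


def what_if(
    model: Callable[[Inputs], float],
    baseline: Inputs,
    name: str,
    values: List[float],
) -> List[Tuple[float, float]]:
    """Vary one input while keeping all others at their baseline values.

    Returns (input value, change in prediction relative to the baseline).
    """
    base_pred = model(baseline)
    results = []
    for v in values:
        scenario = dict(baseline)  # copy so the baseline is not modified
        scenario[name] = v
        results.append((v, model(scenario) - base_pred))
    return results


if __name__ == "__main__":
    # Illustrative baseline scenario; values and units are assumptions.
    baseline = {"wind_speed": 5.0, "discharge": 1000.0}
    for value, delta in what_if(predict_salinity, baseline, "wind_speed", [0.0, 5.0, 10.0]):
        print(f"wind_speed={value:5.1f} -> change in prediction {delta:+.1f}")
```

A user who expects, for example, that a higher discharge lowers the predicted salinity can run the same check on `discharge` and see whether the response has that sign, much like reading the sign of a coefficient in a linear model.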

Future developments: moving to a more general application for Explainable AI

In the presentation, Paula Lambregts and Thomas Stolp introduced the project, the area they are studying, the predictive model they set up, and how they applied Explainable AI. This was followed by a discussion, which is not included in the video. Paula: "From now on, we would like to start moving from this very specific case on salt intrusion towards a more general application of Explainable AI for data-driven models." We'll keep you posted!

More information

- Read the interview with Paula Lambregts and Thomas Stolp from October 2023.

- Go to the project page.

- Download the sheets.

- You can watch the video recording below. If you have any questions, please contact [e-mail address] or [e-mail address] from HKV.